Vertex AI Gemini Streaming: Real-Time AI Responses Guide

If you’ve been keeping an eye on the AI agent space, you’ve likely heard the buzz around Vertex AI Gemini streaming. It sounds impressive: real-time, multimodal conversations with low latency. But when you’re a developer or a decision-maker trying to build the next generation of AI tools, the marketing gloss wears off quickly.

You need to know: Does it really work? Is the Vertex AI Agent Builder pricing going to blow my budget? And how difficult is it to get a basic voice agent off the ground?

I’ve spent some time digging into the platform, testing its limits, and sifting through customer reviews. Here is the unvarnished truth about building with Google’s flagship agent platform in 2026.

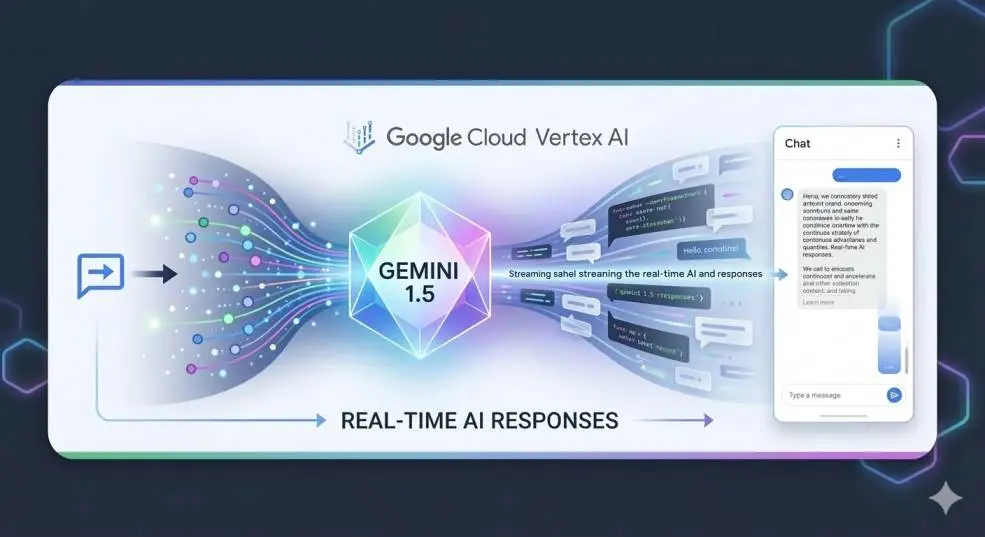

What Is Vertex AI Gemini Streaming?

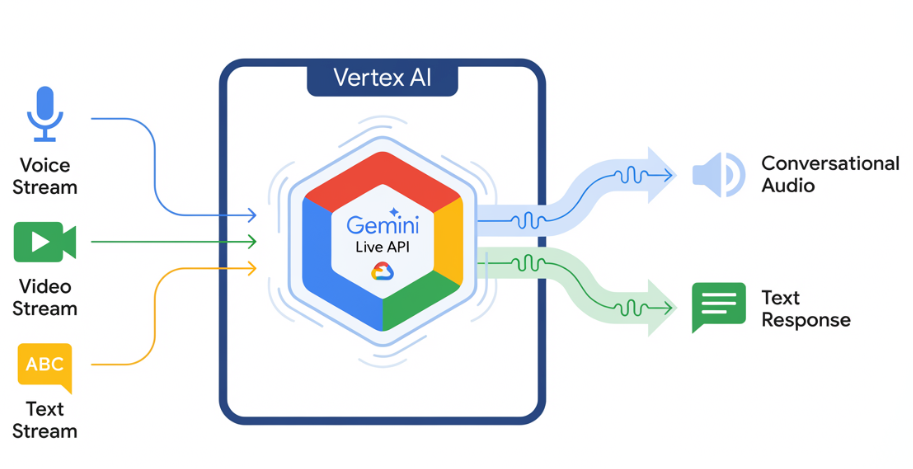

Let’s cut through the jargon. Vertex AI Gemini streaming lets you use Gemini models in real time. It works in both directions through the Gemini Live API. Streaming makes for a fluid conversation.


Streaming allows for a more dynamic interaction than traditional chats, where you send a prompt and wait for a fixed response. You can send audio or text in chunks, and the model can respond in kind, handling interruptions and maintaining context.
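To make the chunk-and-interrupt idea concrete, here is a minimal simulation in plain Python. It does not call the Live API at all: `fake_model_stream`, `listen`, and `run_demo` are hypothetical names, and the "model" is just a list of strings. It only shows the shape of the loop, where a reply arrives piece by piece and stops as soon as the user cuts in.

```python
import asyncio
from typing import AsyncIterator, List, Optional


async def fake_model_stream(chunks: List[str]) -> AsyncIterator[str]:
    """Stand-in for a streaming model: yields the reply one chunk at a time."""
    for chunk in chunks:
        await asyncio.sleep(0)  # hand control back to the event loop, like network I/O would
        yield chunk


async def listen(chunks: List[str], interrupt_after: Optional[int] = None) -> List[str]:
    """Collect reply chunks until the stream ends or the user interrupts.

    interrupt_after is a hypothetical knob: the number of chunks the user
    hears before cutting in. None means the user never interrupts.
    """
    interrupted = asyncio.Event()
    heard: List[str] = []
    async for chunk in fake_model_stream(chunks):
        if interrupted.is_set():
            break  # drop the rest of the reply once the user has cut in
        heard.append(chunk)
        if interrupt_after is not None and len(heard) >= interrupt_after:
            interrupted.set()
    return heard


def run_demo(chunks: List[str], interrupt_after: Optional[int] = None) -> List[str]:
    """Synchronous wrapper so the sketch can be run from a plain script."""
    return asyncio.run(listen(chunks, interrupt_after))
```

Calling `run_demo(["Sure,", "your order", "ships Friday."], interrupt_after=2)` returns only the first two chunks. In a real voice agent the interruption would come from incoming audio rather than a counter.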

Think of it less like typing to ChatGPT and more like talking to someone on a walkie-talkie who can finish your sentences. This is the engine powering the new wave of voice agents and real-time assistants.

Google has integrated this feature into the Vertex AI Agent Builder. Now, you can ground these conversational agents in your own data.

The Good, The Bad, and The Ugly: Honest Pros and Cons

Before you commit to building RAG applications with Vertex AI, you need to see the full picture. Based on Gartner Peer Insights and hands-on developer feedback, here is how the platform really performs.

What Works Well (The "Yes, But" Moments)

1. The "No-Code" Pitch Needs a Caveat. Google markets the drag-and-drop interface heavily. For simple internal FAQs or knowledge base bots, it truly works.

If your goal is to spin up a proof of concept to show stakeholders, the low-code tools are smooth. You can connect to your data sources and see results in minutes.

Yet, the moment you need to do something outside the box, like customizing the conversation flow beyond a basic Q&A, you hit a wall. One reviewer mentioned that the low-code format helps with information retrieval. Yet, it often needs a "super user" to teach others how to scale it.

2. Integration is a Superpower (If You Live in Google Cloud). If your organization is already all-in on Google Cloud, this is a no-brainer.

The native connectors to BigQuery, Cloud Storage, and other databases are seamless. You do not need to manage API keys or worry about network egress costs. As one user put it, it is a "powerful toolbox for Google Cloud users."

3. Scalability is Real. Google’s infrastructure is a beast. When you deploy an agent via Vertex AI, you do not need to worry about servers crashing under load.

The platform works well for enterprise concurrency. Just keep the limits in mind. For example, you can have 10 concurrent sessions per project on the Live API.
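One common way to stay under a session cap like this is to gate session creation behind a semaphore on the client side. The sketch below assumes the figure of 10 quoted above (check your own project's quota) and uses a short sleep as a placeholder for real session work. `run_sessions` and `demo` are hypothetical names, and nothing here talks to Google Cloud.

```python
import asyncio
from typing import List, Tuple

MAX_CONCURRENT_SESSIONS = 10  # figure quoted in this article; verify against your quota


async def run_sessions(session_count: int,
                       limit: int = MAX_CONCURRENT_SESSIONS) -> Tuple[List[int], int]:
    """Run session_count fake sessions, never more than `limit` at once.

    Returns the finished session ids and the peak number of sessions
    that were active at the same time.
    """
    semaphore = asyncio.Semaphore(limit)
    active = 0
    peak = 0

    async def one_session(session_id: int) -> int:
        nonlocal active, peak
        async with semaphore:  # waits here when `limit` sessions are already open
            active += 1
            peak = max(peak, active)
            await asyncio.sleep(0.01)  # placeholder for real streaming work
            active -= 1
        return session_id

    results = await asyncio.gather(*(one_session(i) for i in range(session_count)))
    return list(results), peak


def demo(session_count: int = 25) -> Tuple[List[int], int]:
    """Synchronous wrapper for running the sketch from a script."""
    return asyncio.run(run_sessions(session_count))
```

With 25 requested sessions, the extra 15 simply queue until a slot frees up, so the caller sees latency rather than quota errors.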

Where It Gets Frustrating (The Dealbreakers)

1. Pricing is a Maze. Let’s address the elephant in the room: Vertex AI Agent Builder pricing. It is not simple. You aren’t paying a flat monthly fee.

You pay for the underlying model inference (Gemini costs), the Agent Engine compute resources, and the data retrieval costs.


I’ve seen startups get excited, build a prototype, and then get a shock when they scale because they forgot to calculate the cost per query.

It starts at things like $12 per thousand chat queries, but that’s the base. You need to do the math on your specific use case, or you will overspend.
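The math itself is short enough to script. This is a rough sketch, not a pricing tool: the $12 per thousand queries default is the base figure mentioned above, and `other_monthly_costs` is a hypothetical catch-all for the model inference, Agent Engine, and retrieval charges, which you would need to fill in from the official pricing calculator.

```python
def estimate_monthly_bill(
    queries_per_month: int,
    price_per_thousand_queries: float = 12.0,  # base figure quoted in this article
    other_monthly_costs: float = 0.0,          # hypothetical: inference, compute, retrieval
) -> float:
    """Return a rough monthly cost in dollars for a given query volume."""
    if queries_per_month < 0:
        raise ValueError("queries_per_month must be non-negative")
    query_cost = queries_per_month / 1000 * price_per_thousand_queries
    return round(query_cost + other_monthly_costs, 2)


if __name__ == "__main__":
    # Compare a prototype, a modest launch, and a viral month.
    for volume in (1_000, 50_000, 1_000_000):
        print(f"{volume:>9,} queries/month -> ${estimate_monthly_bill(volume):,.2f}")
```

At the base rate alone, 50,000 queries a month comes to $600 and a million comes to $12,000, which is the jump that tends to surprise teams moving from prototype to production.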

2. Documentation is a Barrier to Entry. Many reviewers, including IT professionals, say the documentation is often "too complicated" or "not intuitive enough." It’s dense. It assumes you already understand the Google Cloud ecosystem deeply.

If you’re a solo developer trying to build something cool over a weekend, be prepared to spend hours deciphering error messages and hunting for code snippets that actually work.

The Firebase blog has great examples for React Native and Kotlin, but finding the equivalent for Python or Node.js can feel like a scavenger hunt.

3. The "Preview" Trap. Many cool features, like the Gemini Live API for bidirectional audio, are still in "preview" or have limited support on some platforms.

As of early 2026, while you can use the Live API on Android and Flutter, support for iOS and the Web is still marked as "coming soon" in some documentation. Building a cross-platform app? You’re going to have to manage a lot of fallback logic or wait for Google to catch up.
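At its simplest, that fallback logic is a capability check made once at startup. The sketch below hard-codes the platform list from the paragraph above, which is an assumption that will go stale, and the mode names `live_streaming` and `turn_based_fallback` are hypothetical labels for whatever your app actually does in each case.

```python
# Platforms this article lists as supporting the Live API as of early 2026.
# Treat this as a snapshot, not a source of truth.
LIVE_API_PLATFORMS = frozenset({"android", "flutter"})


def choose_voice_mode(platform: str) -> str:
    """Pick real-time streaming where it is supported, a slower fallback elsewhere."""
    normalized = platform.strip().lower()
    if normalized in LIVE_API_PLATFORMS:
        return "live_streaming"
    # Hypothetical fallback: record audio, send one request, play back the reply.
    return "turn_based_fallback"
```

Keeping the decision in one function means that when iOS or Web support lands, the change is a one-line edit to the set rather than a hunt through UI code.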

Navigating the Agent Builder: Features vs. Reality

When you log into the console (https://console.cloud.google.com/vertex-ai/agent-builder), you are presented with a lot of options. Here is what you actually need to pay attention to.

The "Agent Garden" Paradox

Google provides Agent Garden, a library of pre-built agents and templates. This sounds fantastic. In reality, it’s a mixed bag. The templates are great for learning, but they rarely fit your exact production needs. You’ll likely end up using them as a reference rather than a deployable solution.

The Agent Development Kit (ADK)

If you are serious about building complex agents, you need to use the ADK. This is where the "what features are available as part of Vertex AI Agent Builder" question gets answered. The ADK lets you define tools, manage state, and orchestrate multi-agent systems. It uses the Agent-to-Agent (A2A) protocol.

This is powerful. You can use one agent to triage customer issues. It can pass the case to a specialized order-lookup agent, which then sends the information back to the customer service agent for a response. It automates workflows, not just conversations.
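The handoff pattern is easier to see in code. The sketch below is plain Python and deliberately does not use the ADK or the A2A protocol: every name in it (`Case`, `triage_agent`, `order_lookup_agent`, `customer_service_agent`, `handle`, and the `ORDERS` table) is hypothetical, and each "agent" is just a function. It only illustrates the routing: triage, then a specialist, then back to customer service.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

# Hypothetical order data standing in for a real order system.
ORDERS: Dict[str, str] = {"A100": "shipped", "B200": "processing"}


@dataclass
class Case:
    """Shared state passed from one agent to the next."""
    message: str
    order_id: Optional[str] = None
    order_status: Optional[str] = None
    trail: List[str] = field(default_factory=list)  # which agents touched the case


def triage_agent(case: Case) -> str:
    """Decide which agent should handle the case next."""
    case.trail.append("triage")
    match = re.search(r"\b[A-Z]\d{3}\b", case.message)  # toy order-id format
    if match:
        case.order_id = match.group(0)
        return "order_lookup"
    return "customer_service"


def order_lookup_agent(case: Case) -> str:
    """Specialist: attach the order status, then hand back to customer service."""
    case.trail.append("order_lookup")
    case.order_status = ORDERS.get(case.order_id or "", "not found")
    return "customer_service"


def customer_service_agent(case: Case) -> str:
    """Compose the final reply from whatever the other agents collected."""
    case.trail.append("customer_service")
    if case.order_id:
        return f"Your order {case.order_id} is {case.order_status}."
    return "Thanks for reaching out. Could you share your order number?"


def handle(message: str) -> Tuple[str, List[str]]:
    """Route one customer message through the agents and return (reply, trail)."""
    case = Case(message=message)
    if triage_agent(case) == "order_lookup":
        order_lookup_agent(case)
    return customer_service_agent(case), case.trail
```

For example, `handle("Where is my order A100?")` passes through all three agents, while a message with no order number skips the lookup step entirely.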

RAG and Grounding

If you are building RAG applications with Vertex AI, this is where the platform shines. You can connect your data directly to the agent with minimal code. The retrieval works well, and the citations are clear.

Yet, be aware of the "data accuracy" sweet spot. It works best with structured data or clean documentation. If you feed it messy, contradictory information, your agent will reflect that chaos.

Is the Google AI Agent Builder Free? (And Other Pricing Myths)

There is a common search for "Google AI Agent Builder free." Yes, there is a free tier. Google Cloud offers credits for new users, and Vertex AI has a free usage quota for specific services.

But "free" is a starting point, not a business model. Once you exceed the free tier, you pay for:

- Model Inference: Every token processed by Gemini costs money.
- Agent Engine: The compute resources that keep your agent running.
- Storage and Retrieval: Storing your documents in Cloud Storage and querying them.

My advice: Use the free tier to prototype. But before you go live, use the pricing calculator. Estimate your monthly conversations (queries) and multiply.

If you expect millions of interactions, costs will be high. Yet, they may still be competitive. This is especially true when you consider the engineering hours needed to build a similar system from scratch.

Who Should Use Vertex AI (And Who Should Run Away)

To make this guide truly actionable, let's break down the user profiles.

Best Fit: The Enterprise Integrator

You are a mid-to-large company already using Google Cloud. You have a team of backend engineers who know Python and some Java/Kotlin.

Your goal is to automate internal processes—like an HR benefits bot or an IT support ticketing agent. The security, compliance (SOC 2, etc.), and scalability are worth the learning curve.

Good Fit: The Mobile-First Innovator

You are building a mobile app (Android or Flutter) and want a native, real-time voice experience. The Firebase integration for the Live API is exactly what you need. It handles the WebSocket connection and audio streaming so you can focus on the UI.

Bad Fit: The "I Need a Chatbot" Business

If you want a chatbot for your website to answer basic questions, Vertex AI is overkill. You’ll spend hours on IAM roles, service accounts, and quota increases.

You’d be better served by something like Voiceflow or a simple Dialogflow ES agent. The complexity of Vertex AI will slow you down.

Bad Fit: The Budget-Conscious Startup

If you have no idea what your query volume will be, the variable pricing model can be risky. You could build a viral app and then face a massive cloud bill before you’ve monetized a single user.

Final Thoughts

Vertex AI Gemini streaming is a technological marvel. The future is having a low-latency chat with an AI. This AI can see your screen, hear your voice, and access your enterprise data. It allows for smooth and interactive conversations.

But right now, in 2026, it’s a tool for builders with patience and Google Cloud expertise. The documentation is challenging, the pricing is confusing, and the features are scattered between "GA" and "Preview."

If you go in with open eyes—knowing you’re putting together many parts instead of buying a final product—you can create something truly powerful.

Remember the golden rule of AI development: start with the problem, not the platform. If Vertex AI is the right tool to solve that problem, it’s worth the climb. If not, don't force it.